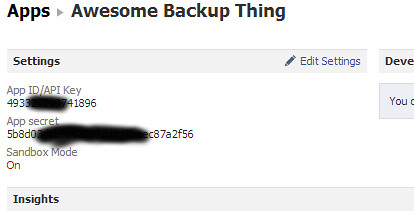

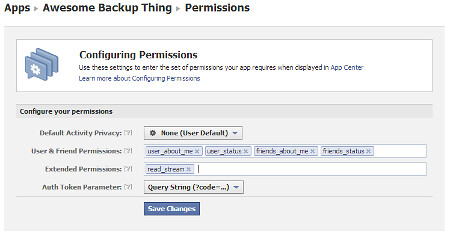

In my previous post, I talked about how I’m using a Raspberry Pi to run a Facebook backup service and provided the Python code needed to get (and maintain) a valid Facebook token to do this. This post will be discussing the actual Facebook backup service and the Python code to do that. It will be my second Python program ever (the first was in the previous post), so there will likely be better ways to do what I’ve done, although you’ll see it’s still a pretty simple exercise. (I’m happy to hear about possible improvements.)

The first thing I need to do is pull in all the Python modules that will be useful. The extra packages should already be installed from the previous post. Also, because the Facebook messages will be backed up to Gmail using its IMAP interface, the Google credentials are specified here, too. Since those credentials are something you will want to keep secret at all costs, that is all the more reason to run this on a home server rather than on a publicly hosted server.

    from facepy import GraphAPI
    import urlparse
    import dateutil.parser
    import dateutil.tz  # used explicitly in message2str below
    from crontab import CronTab
    import imaplib
    import time

    # How many status updates to go back in time (first time, and between runs)
    MAX_ITEMS = 5000

    # How many items to ask for in each request
    REQUEST_ITEMS = 25

    # Default recipient
    DEFAULT_TO = "my_gmail_acct@gmail.com"  # Replace with yours

    # Suffix to turn Facebook message IDs into email IDs
    ID_SUFFIX = "@exportfbfeed.facebook.com"

    # Gmail account
    GMAIL_USER = "my_gmail_acct@gmail.com"  # Replace with yours

    # and its secret password
    GMAIL_PASS = "S3CR3TC0D3"  # Replace with yours

    # Gmail folder to use (will be created if necessary)
    GMAIL_FOLDER = "Facebook"

Before we get into the guts of the backup service, I first need to create a few basic functions to simplify the code that comes later. Initially, there’s a function that is used to make it easy to pull a value from the results of a call to the Facebook Graph API:

    def lookupkey(the_list, the_key, the_default):
        try:
            return the_list[the_key]
        except KeyError:
            return the_default
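
For ordinary dictionaries, the built-in `dict.get` method does the same job as this helper. The snippet below is only a small illustration: it repeats the helper so that it runs on its own, and the `post` dictionary is made up rather than a real Graph API result.

```python
def lookupkey(the_list, the_key, the_default):
    # Return the value for the key, or the default if the key is missing
    try:
        return the_list[the_key]
    except KeyError:
        return the_default

# Hypothetical Graph API result, for illustration only
post = {'id': '123_456', 'type': 'status'}

found = lookupkey(post, 'type', '')       # key present
missing = lookupkey(post, 'message', '')  # key absent, so the default comes back

# dict.get gives the same answers for an ordinary dictionary
same_found = post.get('type', '')
same_missing = post.get('message', '')
```
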

Next, a function to retrieve the Facebook username for a given Facebook user. Given that we want to back up messages into Gmail, we have to make them look like email. So, each message will have to appear to come from a unique email address belonging to the relevant Facebook user. Since Facebook generally provides all their users with email addresses at the facebook.com domain based on their usernames, I've used these. However, to make it a bit more efficient, I cache the usernames in a dictionary so that I don't have to query Facebook again when the same person appears in the feed multiple times.

    def getusername(id, friendlist):
        uname = lookupkey(friendlist, id, '')
        if '' == uname:
            uname = lookupkey(graph.get(str(id)), 'username', id)
            friendlist[id] = uname  # Add the entry to the dictionary for next time
        return uname

The email standards expect times and dates to appear in particular formats, so now a function to achieve this based on whatever date format Facebook gives us:

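    def getnormaldate(funnydate):
        dt = dateutil.parser.parse(funnydate)
        tz = long(dt.utcoffset().total_seconds()) / 60  # UTC offset in minutes
        # Work on the absolute value so negative offsets come out as e.g. -0530
        sign = '+' if 0 <= tz else '-'
        tzHH = str(abs(tz) / 60).zfill(2)
        tzMM = str(abs(tz) % 60).zfill(2)
        # %H (24-hour clock) rather than %I, since no AM/PM marker is emitted
        return dt.strftime("%a, %d %b %Y %H:%M:%S") + ' ' + sign + tzHH + tzMM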
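
For comparison, here is a rough sketch of the same conversion using only the standard library. It assumes Python 3 (where `email.utils.format_datetime` is available) and assumes the timestamps look like `2013-05-01T10:15:30+0000`; the function name `rfc2822_date` is my own invention for this example, not part of the program.

```python
from datetime import datetime
from email.utils import format_datetime

def rfc2822_date(fb_date):
    # Assumes Facebook-style timestamps such as "2013-05-01T10:15:30+0000"
    dt = datetime.strptime(fb_date, "%Y-%m-%dT%H:%M:%S%z")
    # format_datetime produces the date format that email headers expect
    return format_datetime(dt)

utc_example = rfc2822_date("2013-05-01T10:15:30+0000")
negative_example = rfc2822_date("2013-05-01T10:15:30-0530")
```

Here `utc_example` comes out as `Wed, 01 May 2013 10:15:30 +0000`, and the negative offset is kept as `-0530`.
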

Next, a function to find the relevant bit of a URL to help travel back and forth in the Facebook feed. Given that the feed is returned to us from the Graph API in small chunks, we need to know how to query the next or previous chunk in order to get it all. Facebook uses a URL format to give us this information, but I want to unpack it to allow for more targeted navigation.

    def getpagingpart(urlstring, part):
        url = urlparse.urlsplit(urlstring)
        qs = urlparse.parse_qs(url.query)
        return qs[part][0]
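
To see what this function pulls out, here is a small standalone version run against a made-up paging link. The URL is only an assumption about the general shape of the links, and the import fallback is there so the snippet runs on either Python 2 or Python 3.

```python
try:
    from urllib.parse import urlsplit, parse_qs  # Python 3
except ImportError:
    from urlparse import urlsplit, parse_qs      # Python 2

def getpagingpart(urlstring, part):
    url = urlsplit(urlstring)
    qs = parse_qs(url.query)  # maps each parameter name to a list of values
    return qs[part][0]

# Made-up paging link, for illustration only
next_link = "https://graph.facebook.com/me/home?limit=25&until=1367400000"

until_value = getpagingpart(next_link, 'until')
limit_value = getpagingpart(next_link, 'limit')
```

Note that the values come back as strings (`'1367400000'` and `'25'`), which is convenient because they get pasted straight back into the next query.
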

Now a function to construct the headers and body of the email from a range of information gleaned from processing the Facebook Graph API results.

    def message2str(fromname, fromaddr, toname, toaddr, date, subj1, subj2, msgid, msg1, msg2, inreplyto=''):
        if '' == inreplyto:
            header = ''
        else:
            header = 'In-Reply-To: <' + inreplyto + '>\n'
        # The leading "From " line carries a UTC timestamp on a 24-hour clock (%H, not %I)
        utcdate = dateutil.parser.parse(date).astimezone(dateutil.tz.tzutc()).strftime("%a %b %d %H:%M:%S %Y")
        return "From nobody {}\nFrom: {} <{}>\nTo: {} <{}>\nDate: {}\nSubject: {} - {}\nMessage-ID: <{}>\n{}Content-Type: text/html\n\n{}{}\n".format(utcdate, fromname, fromaddr, toname, toaddr, date, subj1, subj2, msgid, header, msg1, msg2)

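An alternative to assembling the headers by hand is to let the standard library's `email` package do it. The following is only a sketch of that idea, not what the program above does: the function name `build_message` and all of the example values are made up for illustration.

```python
from email.mime.text import MIMEText

def build_message(fromname, fromaddr, toaddr, date, subject, msgid, html, inreplyto=''):
    # MIMEText sets the Content-Type header to text/html for us
    msg = MIMEText(html, 'html')
    msg['From'] = '{} <{}>'.format(fromname, fromaddr)
    msg['To'] = toaddr
    msg['Date'] = date
    msg['Subject'] = subject
    msg['Message-ID'] = '<' + msgid + '>'
    if inreplyto:
        # Only replies (comments) point back at a parent message
        msg['In-Reply-To'] = '<' + inreplyto + '>'
    return msg

# Hypothetical values, for illustration only
example = build_message(
    'Alice Example', 'alice.example@facebook.com', 'my_gmail_acct@gmail.com',
    'Wed, 01 May 2013 10:15:30 +0000', 'status - 123_456',
    '123_456@exportfbfeed.facebook.com', 'Hello from Facebook')

raw = example.as_string()  # the text that would be handed to IMAP
```

The design trade-off is that the library takes care of header formatting, at the cost of a little less control over the exact layout of the message.
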
Okay, now we've gotten all that out of the way, here's the main function to process a message obtained from the Graph API and place it in an IMAP message folder. The Facebook message is in the form of a dictionary, so we can look up the relevant parts by using keys. In particular, any comments to a message will appear in the same format, so we recurse over those as well using the same function.

Note that in a couple of places I call encode("ascii", "ignore"). This is an ugly hack that strips out all of the unicode goodness that was in the original Facebook message (which allows foreign language characters and symbols), dropping anything exotic to leave plain ASCII characters behind. I do this because, for some reason, the Python installation on my Raspberry Pi would crash the program whenever it came across unusual characters. To keep everything working smoothly, I make sure these aren't present when the text is processed later.
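
To make the effect of that hack concrete, the short example below shows what gets lost. It also shows one possible alternative, the standard `xmlcharrefreplace` error handler, which keeps the output pure ASCII but turns each unusual character into an HTML character reference. Since the messages are stored as HTML, that might preserve the original text, although I haven't tried it in the backup program itself. The sample name is made up.

```python
# Made-up name containing two non-ASCII characters
name = u"Zo\u00eb Caf\u00e9"

# What the program does: silently drop anything that is not ASCII
stripped = name.encode("ascii", "ignore")

# Possible alternative: replace non-ASCII characters with HTML character references
escaped = name.encode("ascii", "xmlcharrefreplace")
```

After running this, `stripped` holds `Zo Caf` while `escaped` holds `Zo&#235; Caf&#233;`, which a mail client rendering HTML would display with the accents intact.
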

    def printdata(data, friendlist, replytoid='', replytosub='', max=MAX_ITEMS, conn=None):
        c = 0
        for d in data:
            id = lookupkey(d, 'id', '')  # get the id of the post
            msgid = id + ID_SUFFIX
            try:  # get the name (and id) of the friend who posted it
                f = d['from']
                n = f['name'].encode("ascii", "ignore")
                fid = f['id']
                uname = getusername(fid, friendlist) + "@facebook.com"
            except KeyError:
                n = ''
                fid = ''
                uname = ''
            try:  # get the recipient (eg. if a wall post)
                dest = d['to']
                destn = dest['name']
                destid = dest['id']
                destname = getusername(destid, friendlist) + "@facebook.com"
            except KeyError:
                destn = ''
                destid = ''
                destname = DEFAULT_TO
            t = lookupkey(d, 'type', '')  # get the type of this post
            try:
                st = d['status_type']
                t += " " + st
            except KeyError:
                pass
            try:  # get the message they posted
                msg = d['message'].encode("ascii", "ignore")
            except KeyError:
                msg = ''
            try:  # there may also be a description
                desc = d['description'].encode("ascii", "ignore")
                if '' == msg:
                    msg = desc
                else:
                    msg = msg + "<br/>\n" + desc
            except KeyError:
                pass
            try:  # get an associated image
                img = d['picture']
                msg = msg + '<br/>\n<img src="' + img + '"/> '
            except KeyError:
                img = ''
            try:  # get link details if they exist
                ln = d['link']
                ln = '<br/>\n<a href="' + ln + '">link</a>'
            except KeyError:
                ln = ''
            try:  # get the date
                date = d['created_time']
                date = getnormaldate(date)
            except KeyError:
                date = ''
            if '' == msg:
                continue  # nothing worth storing for this item
            if '' == replytoid:
                email = message2str(n, uname, destn, destname, date, t, id, msgid, msg, ln)
            else:
                email = message2str(n, uname, destn, destname, date, 'Re: ' + replytosub, replytoid, msgid, msg, ln, replytoid + ID_SUFFIX)
            if conn:
                conn.append(GMAIL_FOLDER, "", time.time(), email)
            else:
                print email
                print "----------"
            try:  # process comments if there are any
                comments = d['comments']
                commentdata = comments['data']
                printdata(commentdata, friendlist, replytoid=id, replytosub=t, conn=conn)
            except KeyError:
                pass
            c += 1
            if c == max:
                break
        return c

The last bit of the program uses these functions to perform the backup and to set up a cron job to run the program again every hour. Here's how it works.

First, I grab the Facebook Graph API token that the previous program (setupfbtoken.py) provided, and initialise the module that will be used to query it.

    # Initialise the Graph API with a valid access token
    try:
        with open("fbtoken.txt", "r") as f:
            oauth_access_token = f.read()
    except IOError:
        print 'Run setupfbtoken.py first'
        exit(-1)

    # See https://developers.facebook.com/docs/reference/api/user/
    graph = GraphAPI(oauth_access_token)

Next, I set up the connection to Gmail that will be used to store the messages using the credentials from before.

    # Setup mail connection
    mailconnection = imaplib.IMAP4_SSL('imap.gmail.com')
    mailconnection.login(GMAIL_USER, GMAIL_PASS)
    mailconnection.create(GMAIL_FOLDER)

Now we just need to initialise some things that will be used in the main loop: the cache of the Facebook usernames, the count of the number of status updates to read, and the timestamp that marks the point in time to begin reading status updates from. This last one is to ensure that we don't keep uploading the same messages again and again, and the timestamp is kept in the file fbtimestamp.txt.

    friendlist = {}
    countdown = MAX_ITEMS
    try:
        with open("fbtimestamp.txt", "r") as f:
            since = '&since=' + f.read()
    except IOError:
        since = ''

Now we do the actual work, reading the status feed and processing its items:

    stream = graph.get('me/home?limit=' + str(REQUEST_ITEMS) + since)
    newsince = ''
    while stream and 0 < countdown:
        streamdata = stream['data']
        numitems = printdata(streamdata, friendlist, max=countdown, conn=mailconnection)
        if 0 == numitems:
            break
        countdown -= numitems
        try:  # get the link to ask for next (going back in time another step)
            p = stream['paging']
            next = p['next']
            if '' == newsince:
                try:
                    prev = p['previous']
                    newsince = getpagingpart(prev, 'since')
                except KeyError:
                    pass
        except KeyError:
            break
        until = '&until=' + getpagingpart(next, 'until')
        stream = graph.get('me/home?limit=' + str(REQUEST_ITEMS) + since + until)
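
The paging pattern in this loop can be tried out without touching Facebook at all. The sketch below is a simplified stand-in, not the program's real code: `FAKE_PAGES`, `fetch_page` and `walk_feed` are all invented for the example, and the fake links only imitate the idea of an `until` parameter pointing further back in time.

```python
try:
    from urllib.parse import urlsplit, parse_qs  # Python 3
except ImportError:
    from urlparse import urlsplit, parse_qs      # Python 2

# Stand-in for the feed: three fake pages keyed by the 'until' cursor
FAKE_PAGES = {
    None: {'data': ['post5', 'post4'],
           'paging': {'next': 'https://example.invalid/feed?until=300'}},
    '300': {'data': ['post3', 'post2'],
            'paging': {'next': 'https://example.invalid/feed?until=100'}},
    '100': {'data': ['post1']},  # no 'paging' key: nothing older to fetch
}

def fetch_page(until=None):
    # Hypothetical replacement for graph.get()
    return FAKE_PAGES[until]

def walk_feed(max_items):
    collected = []
    page = fetch_page()
    while page and len(collected) < max_items:
        remaining = max_items - len(collected)
        collected.extend(page['data'][:remaining])
        try:  # follow the link to the next (older) chunk, if there is one
            next_link = page['paging']['next']
        except KeyError:
            break
        until = parse_qs(urlsplit(next_link).query)['until'][0]
        page = fetch_page(until)
    return collected

all_posts = walk_feed(10)   # runs until the feed is exhausted
first_three = walk_feed(3)  # stops early once the limit is reached
```

The two calls show both ways out of the loop: `all_posts` ends when a page has no paging link, and `first_three` ends when the item limit is hit.
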

Now we clean things up: record the new timestamp and close the connection to Gmail.

    if '' != newsince:
        with open("fbtimestamp.txt", "w") as f:
            f.write(newsince)  # Record the new timestamp for next time
    mailconnection.logout()

Finally, we set up a cron job to keep the status updates flowing. As you can probably guess from this code snippet, this is all meant to be saved in a file called exportfbfeed.py.

    cron = CronTab()  # get crontab for the current user
    # list() also covers python-crontab versions where find_comment returns a generator
    if not list(cron.find_comment("exportfbfeed")):
        job = cron.new(command="python ~/exportfbfeed.py", comment="exportfbfeed")
        job.minute.on(0)  # run this script @hourly, on the hour
        cron.write()

Alright. Well, that was a little longer than I thought it would be. However, the bit that does the actual work is not very big. (No sniggering, people. This is a family show.)

It's been interesting to see how stable the Raspberry Pi has been. While it wasn't designed to be a home server, it's been running fine for me for weeks.

There was an additional benefit to this backup service that I hadn't expected. Since all my email and Facebook messages are now in the one place, I can easily search the lot of them from a single query. In fact, the Facebook search feature isn't very extensive, so it's great that I can now do Google searches to look for things people have sent me via Facebook. It's been a pretty successful project for me and I'm glad I got the chance to play with a Raspberry Pi.

For those that want the original source code files, rather than cut-and-pasting from this blog, you can download them here:

If you end up using this for something, let me know!